Primers • GDPval

- Overview

- Benchmark Structure

- Task Design

- Dataset Structure

- Evaluation Methodology

- AutoRaters

- What GDPval Measures

- Reported Results

- Scope and Limitations

- Relation to Other Benchmarks

Overview

OpenAI introduced GDPval as a benchmark for evaluating model performance on realistic occupational tasks rather than narrowly scoped academic or synthetic problems.

The benchmark spans 44 occupations across the nine largest sectors of U.S. GDP, motivating its name. These occupations were selected to cover a broad range of economically relevant work, with tasks intended to reflect real professional workflows rather than benchmark-style abstractions.

A central motivation for GDPval is that many existing evaluations measure component capabilities, such as reasoning, coding, or retrieval, but do not directly evaluate end-to-end performance on work tasks. GDPval is designed to help bridge that gap by evaluating models on tasks that resemble practical assignments professionals perform.

Tasks often require combining several capabilities within a single evaluation instance, including planning, information synthesis, multimodal reasoning, computer use, and deliverable generation. Many tasks are also intentionally long horizon, with some corresponding to assignments estimated to take humans multiple hours to complete.

Unlike traditional benchmarks that rely primarily on exact-match scoring, GDPval uses a combination of expert judgments, structured grading criteria, and automated evaluators to assess output quality.

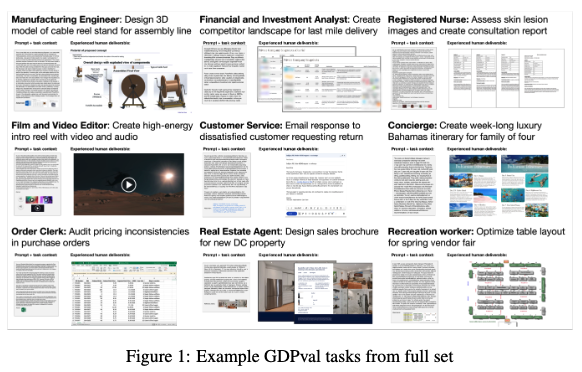

Figure: Example task categories from GDPval.

Benchmark Structure

GDPval contains 1,320 tasks in the full benchmark, with a 220-task gold subset publicly released through Hugging Face.

Rather than organizing evaluation around isolated skills, GDPval is organized around occupational tasks. Each task typically includes:

- a task prompt describing the objective

- supporting materials required to perform the task

- explicit deliverable requirements

- metadata describing occupation and sector

- evaluation instructions and grading criteria

Supporting artifacts are often integral to task execution and may include:

- spreadsheets

- PDFs

- images

- datasets

- forms

- reference documents

This makes tasks closer to structured work assignments than benchmark questions.

According to the paper, tasks were sourced and validated with domain professionals, with contributors averaging roughly 14 years of experience.

Task Design

A defining characteristic of GDPval is that outputs are evaluated as work products.

Rather than returning a short answer, models may be asked to produce:

- analyses

- reports

- recommendations

- structured plans

- spreadsheets

- multimodal artifacts

Tasks frequently require multi-stage execution. A single task may involve interpreting materials, extracting information, reasoning over constraints, using tools, and assembling a final deliverable.

For example, a released task from the GDPval dataset includes an auditing task requiring spreadsheet-based sample selection and supporting deliverables:

{

"task_id": "83d10b06-26d1-4636-a32c-23f92c57f30b",

"occupation": "Accountants and Auditors",

"sector": "Professional, Scientific, and Technical Services",

"prompt": "...",

"reference_files": [

"Population.xlsx"

],

"deliverable_files": [

"Sample.xlsx",

"sample_distribution.png"

]

}

This illustrates that GDPval tasks can include both input artifacts and expected output artifacts, not just text prompts.

Dataset Structure

The public release on Hugging Face exposes GDPval as structured task instances.

Examples of released fields include:

{

"task_id": ...,

"sector": ...,

"occupation": ...,

"prompt": ...,

"reference_files": ...,

"reference_file_hf_uris": ...,

"deliverable_text": ...,

"deliverable_files": ...

}

This schema supports both benchmarking and analysis across occupations, sectors, and task types.

Example occupational task types represented in the benchmark include:

examples = [

"Audit sampling with spreadsheets",

"Support transcript analysis",

"Stage plot generation",

"Operational planning tasks",

]

The benchmark is also mapped to occupational activities using U.S. Bureau of Labor Statistics work activity coverage, described in the paper, which is part of how task coverage was constructed.

Evaluation Methodology

Because many tasks do not have a single correct answer, GDPval does not rely solely on conventional benchmark accuracy.

Evaluation combines expert review with comparative judgments. A major component is pairwise preference evaluation, where judges compare candidate outputs and determine which better satisfies task requirements, as described in GDPval.

This is used because many outputs are open-ended deliverables where quality cannot be reduced to exact-match correctness.

Judgments may consider:

- correctness

- completeness

- usefulness

- instruction adherence

- domain appropriateness

- professional quality

Many tasks are also associated with structured grading criteria used to support more systematic evaluation.

Performance is therefore often expressed through comparative win rates rather than simple accuracy.

AutoRaters

A major technical contribution in GDPval is its use of automated evaluators, or autoraters, to scale assessment.

Because expert grading is expensive, the benchmark uses model-based evaluators calibrated against human judgments to score outputs.

These autoraters are used to assess open-ended deliverables through structured comparisons and rubric-guided evaluation, rather than simple correctness checks.

The paper emphasizes two central properties of these evaluators:

- Agreement with expert human judgments

- Scalability for evaluating large numbers of open-ended task outputs

This is important because evaluating realistic work products is itself a difficult problem.

Conceptually, the evaluation pipeline can be thought of as:

task -> model deliverable

-> autorater assessment

-> pairwise or rubric-based judgment

-> benchmark score

In GDPval, evaluation infrastructure is treated as part of benchmark design, not just an auxiliary scoring mechanism.

What GDPval Measures

GDPval is designed to evaluate combinations of capabilities within realistic tasks, including:

- long-horizon reasoning

- planning

- tool use

- multimodal understanding

- professional deliverable generation

Because these are measured jointly within task execution, benchmark performance reflects compound task performance rather than isolated skill scores.

Reported Results

The paper reports that frontier model performance on GDPval has improved over time and that performance is sensitive to additional reasoning effort and task context.

The benchmark is also used to study:

- model progress over time

- effects of inference scaling

- effects of additional context

- human-AI comparison on occupational tasks

These analyses are a substantial part of the benchmark’s contribution beyond the task set itself.

Scope and Limitations

As with any open-ended benchmark, results should be interpreted with several constraints in mind.

Performance depends partly on evaluator consistency, including autorater-human agreement. Subjective tasks also introduce dependence on rubric coverage and judgment criteria.

Occupational coverage is broad but not exhaustive, and benchmark tasks are sampled instances rather than full representations of occupations.

Prompting, scaffolding, and tool configurations can also materially affect performance.

These considerations are important when comparing results across models.

Relation to Other Benchmarks

GDPval differs from many commonly cited evaluations in the level at which it measures performance.

- MMLU evaluates academic knowledge

- SWE-bench evaluates software issue resolution

- OSWorld evaluates computer-use interaction

- GDPval evaluates broader occupational task performance

Its focus is on realistic work assignments that combine multiple capabilities in a single evaluation.